Real-World Application: Entertainment, Celebrity Deepfakes, and Financial Fraud

Written by: Ibtissam

Introduction: Deepfakes Beyond Politics

Deepfakes extend far beyond political manipulation and are now widely used in entertainment and criminal fraud. While some applications are consensual and beneficial for filmmaking and digital creativity, others are exploitative and used to harm individuals financially, emotionally, and socially. This dual nature makes deepfakes both powerful and dangerous in modern society.

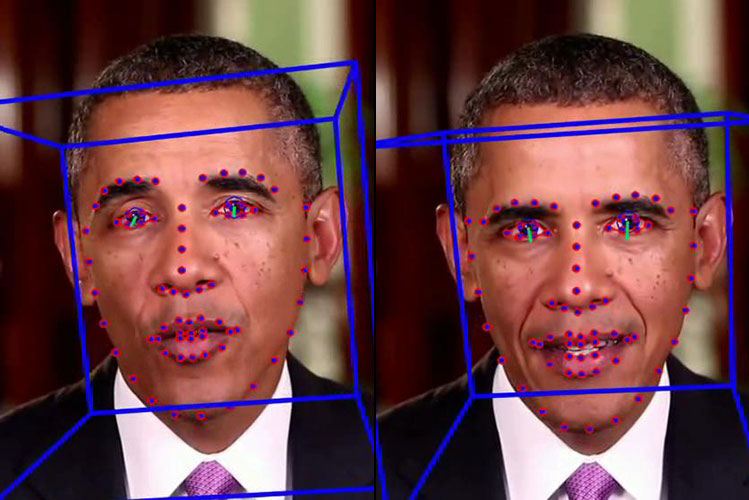

Figure 1: Example of deepfake face manipulation

Source: UC Berkeley News

Entertainment and Media Applications

In the entertainment industry, deepfakes and related face-replacement techniques are used to enhance storytelling and visual effects. Films such as The Irishman used digital de-aging to make actors appear decades younger. Similarly, the Star Wars film Rogue One digitally recreated a younger Princess Leia. These effects combine computer-generated imagery with, increasingly, AI-based facial mapping and machine learning to produce realistic performances.

Outside consensual studio productions, however, deepfakes are also used to create non-consensual celebrity content. AI-generated videos and images can place celebrities in situations they never took part in, including unauthorized advertisements and sexually explicit material. These uses raise serious ethical questions about consent, ownership of one's identity, and digital exploitation.

These cases matter because individuals lose control over their own likeness. Victims often experience emotional distress, reputational harm, and a permanent loss of privacy. Once created, such content spreads rapidly online and is extremely difficult to remove completely.

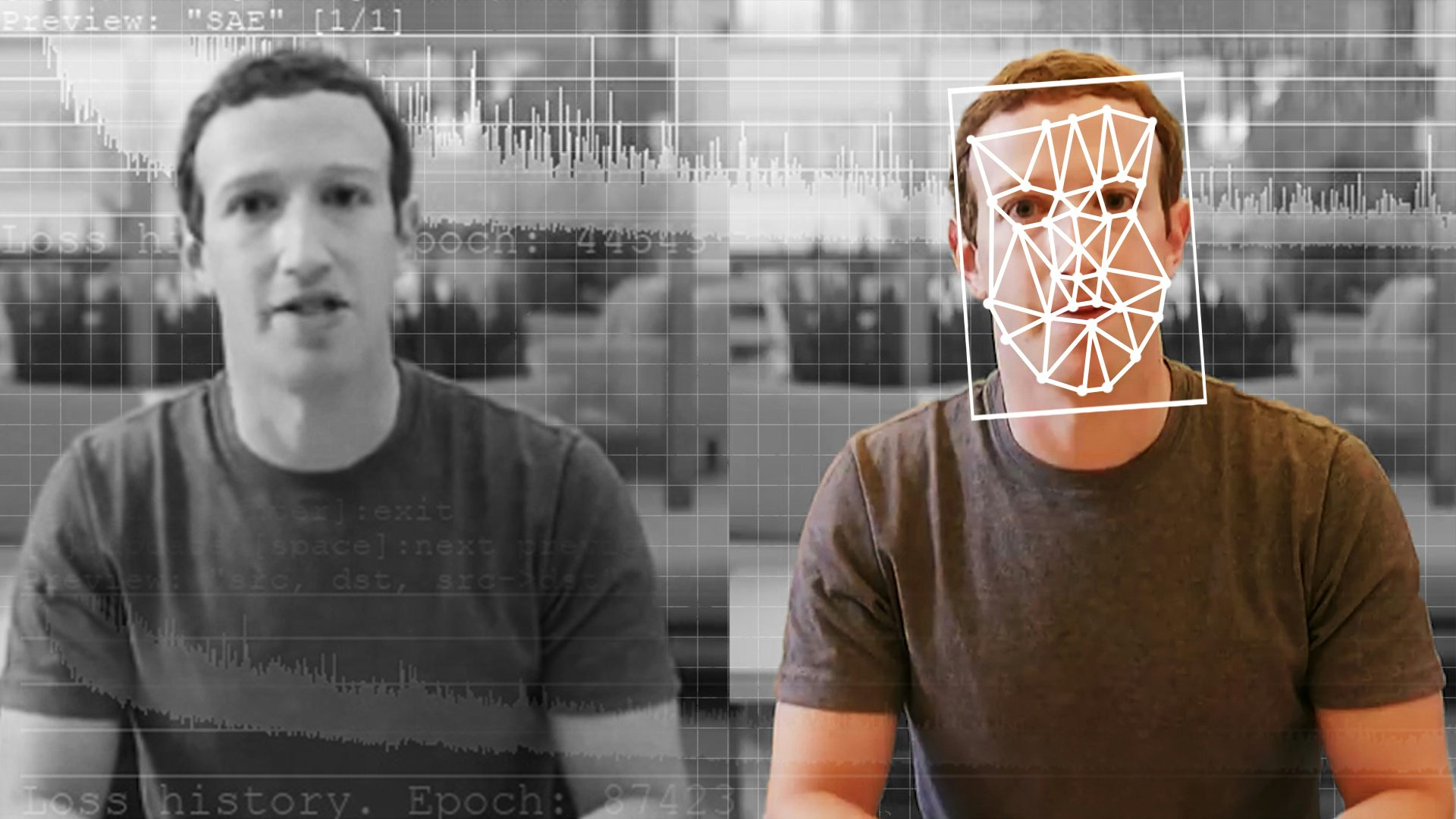

Figure 2: AI technology behind deepfake generation

Source: ncoa.org

Financial Fraud and Scam Cases

Deepfake technology is increasingly used in financial scams, especially through voice cloning. In one widely reported 2019 case, criminals used an AI-generated imitation of a parent company executive's voice to convince the head of a UK energy firm to transfer about $243,000 (€220,000) to a fraudulent account. The cloned voice was convincing enough that the request initially raised no suspicion.

Other scams include romance fraud, in which AI-generated faces or stolen celebrity identities are used to build trust with victims online. Victims are then manipulated into sending money to someone they believe they have a genuine relationship with. Cryptocurrency scams also use deepfake videos of celebrities falsely promoting investment platforms.

These scams are highly effective because they exploit trust and create a sense of urgency that discourages victims from pausing to verify a request. Victims often lose large sums of money and may be too embarrassed to report the crime, which allows scammers to keep operating without consequences.

Figure 3: Example of digital fraud and phishing tactics

Source: The Conversation

Why These Situations Are Complex and Challenging

Addressing deepfakes in entertainment is difficult because of the global nature of digital platforms. Content crosses borders instantly, while laws differ from country to country and legal processes move slowly. There is also a tension between creative freedom and protecting individuals from non-consensual use of their likeness.

Preventing fraud is equally challenging because attackers often operate internationally and use rapidly evolving AI tools. Many victims, especially older adults, lack awareness of these technologies. In addition, psychological manipulation techniques make scams highly convincing even to cautious users.

Social Implications

Deepfake technology undermines trust in digital communication. When any face or voice can be convincingly fabricated, fake recordings can pass as real and authentic recordings can be dismissed as fake, making the truth harder to establish. This raises serious concerns about consent, identity theft, and the long-term reliability of digital media.
