Social Implications and Recommendations for Mitigating Harm

Written by: Majd Agha

Introduction: The Broader Social Impact of Deepfakes

Deepfake technology has social impacts that go far beyond one fake video or one individual victim. As synthetic media becomes more realistic, it can weaken trust in news, public institutions, and online communication. People may become unsure whether videos, audio clips, or images are real, which creates confusion in the information environment. This matters because deepfakes can affect democracy, personal safety, privacy, and the way people decide what information to believe.

Political and Social Concerns

Deepfake technology creates serious political risks by enabling the spread of false information that can influence elections and public opinion. Fake videos of politicians can be used to mislead voters, damage reputations, or create confusion during critical events. The so-called “liar’s dividend” makes the situation worse: once the public knows that convincing fakes exist, people caught in genuine recordings can dismiss real evidence as fake, which further reduces trust in governments and public institutions.

From a social perspective, deepfakes weaken trust between individuals and communities. They are often used for harassment, especially through non-consensual content that targets women and public figures. This can cause emotional harm, damage reputations, and create fear among those targeted. As deepfakes become more common, people may begin to doubt everything they see online, which harms communication and social stability.

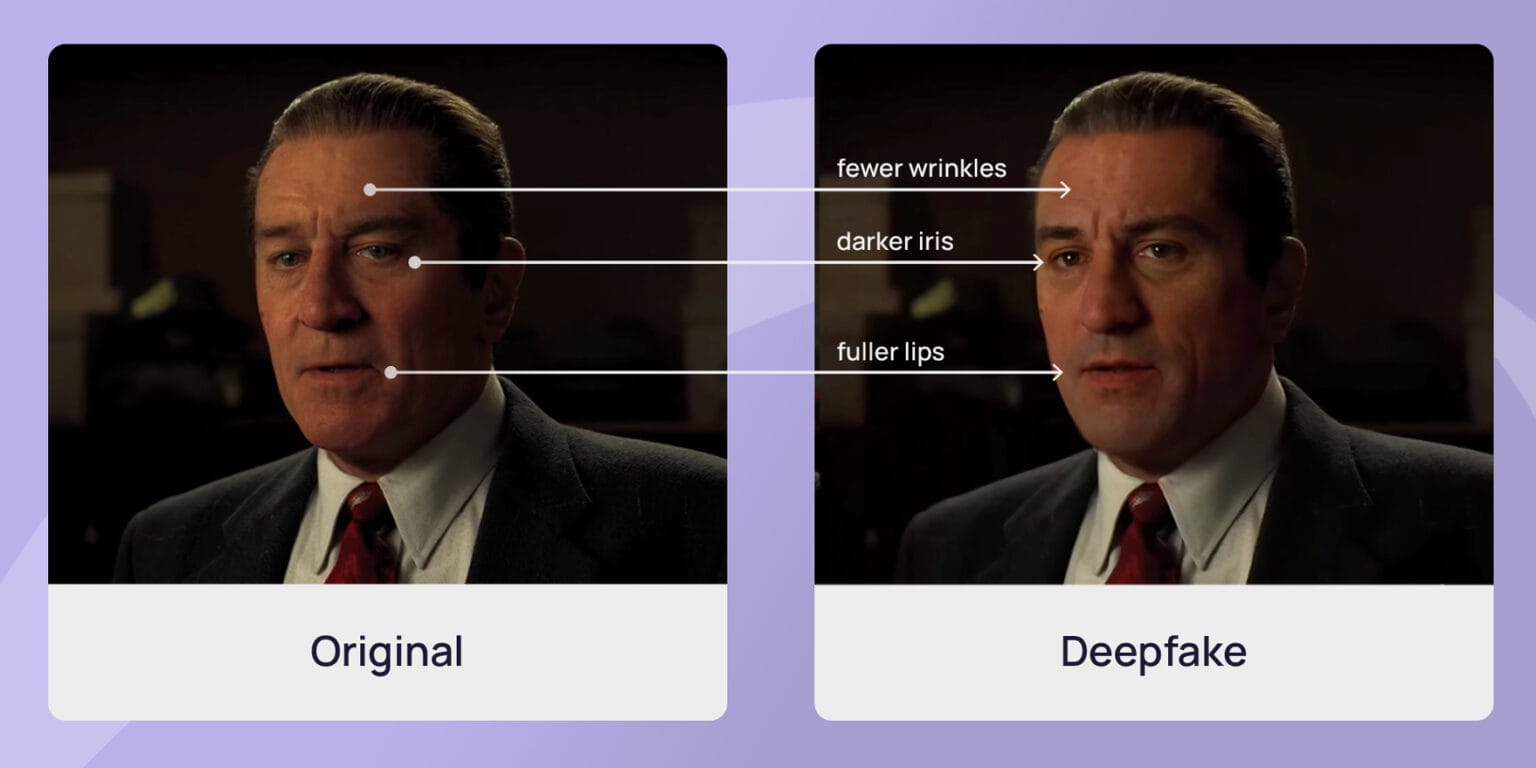

Figure 1: Example of deepfake manipulation showing visual differences between original and AI-generated content, highlighting how realistic synthetic media can be.

Source: Shamook, YouTube (2020)

Who Needs to Care: Stakeholder Analysis

Deepfake technology affects a wide range of stakeholders, each with important responsibilities. Policymakers must protect democratic processes and ensure laws address misuse. Technology companies need to develop detection tools and enforce content moderation policies. Media organizations are responsible for verifying information and maintaining public trust. Educators and researchers play a key role in promoting digital literacy and understanding emerging risks. Law enforcement agencies must handle cases of fraud, harassment, and identity misuse. Finally, everyday citizens must remain aware and critical of the content they consume, as they are directly impacted by misinformation and digital manipulation.

Recommendations for Mitigation

1. Policy and Legal Recommendations

Governments should enact clear laws that criminalize the malicious use of deepfake technology, especially in cases involving fraud, defamation, and election interference. Regulations should also require labeling of synthetic media so users can distinguish between real and manipulated content. Proposals such as the DEEPFAKES Accountability Act in the United States, along with similar efforts elsewhere, including the EU AI Act's disclosure requirements for deepfakes, aim to improve transparency and accountability. However, policymakers must also ensure that these regulations do not restrict freedom of expression or limit legitimate uses of the technology, such as in entertainment and education.

2. Technological Solutions

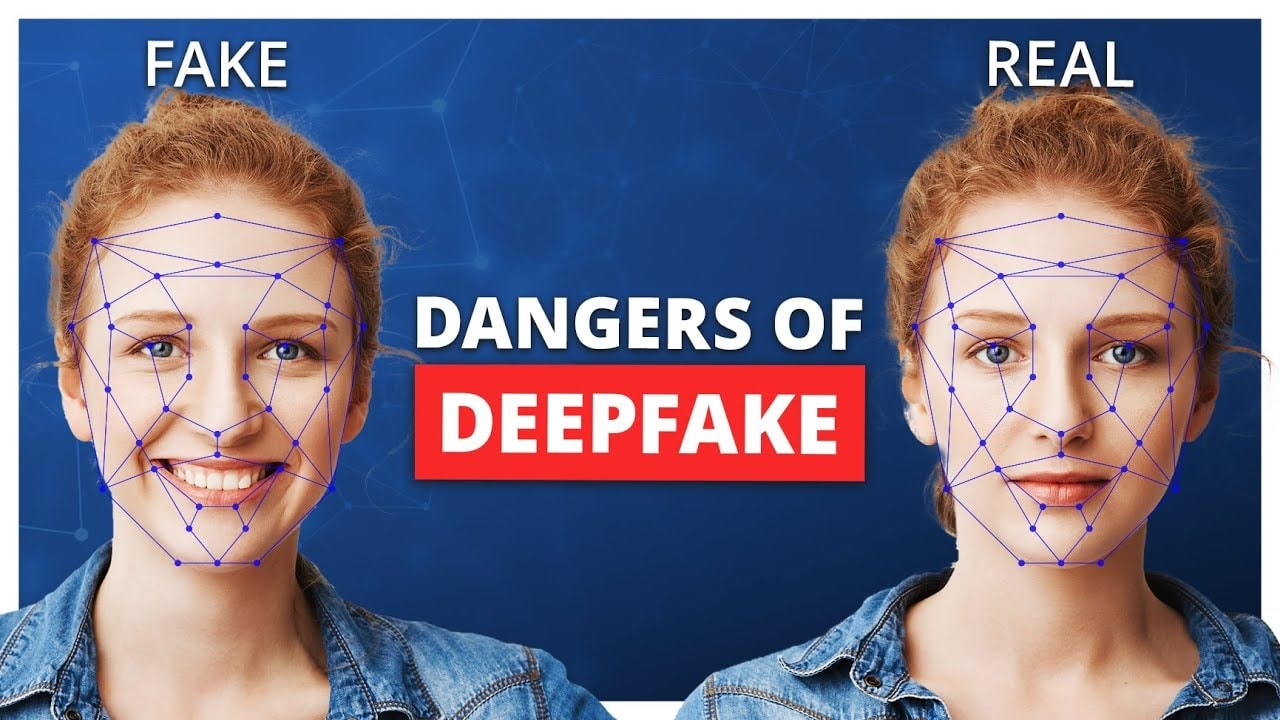

Technological solutions play an important role in reducing the risks of deepfake content. AI-based detection tools are being developed to identify manipulated videos and images, but they are still not fully reliable as deepfake technology continues to improve. Content authentication methods such as digital watermarking and standards like C2PA can help verify the origin and authenticity of media. Other approaches, including cryptographic signatures and platform-based moderation tools, also support detection and prevention. However, technology alone cannot fully solve the problem, which is why it must be combined with policy, education, and responsible platform practices.

Figure 2: AI-based facial analysis used to detect differences between real and deepfake images.
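The core idea behind cryptographic content authentication can be illustrated with a minimal sketch. It assumes a publisher computes a tag over the exact bytes of a media file when it is released, and a viewer later recomputes the tag to check that nothing has changed. The key, the function names `sign_media` and `verify_media`, and the sample bytes are all hypothetical. HMAC-SHA256 from Python's standard library is used here only as a stand-in for a true digital signature: real provenance systems such as C2PA instead attach a signed manifest that is verified against the publisher's public-key certificate, so viewers never need the secret key.

```python
import hashlib
import hmac

# Hypothetical publisher key. HMAC is a symmetric stand-in for a public-key
# signature, used only because the standard library has no signature API.
PUBLISHER_KEY = b"example-secret-key"


def sign_media(media: bytes, key: bytes = PUBLISHER_KEY) -> str:
    """Return a hex authentication tag bound to the exact bytes of the media."""
    return hmac.new(key, media, hashlib.sha256).hexdigest()


def verify_media(media: bytes, tag: str, key: bytes = PUBLISHER_KEY) -> bool:
    """Recompute the tag and compare in constant time; any byte change fails."""
    expected = sign_media(media, key)
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    original = b"raw bytes of a published video"
    tag = sign_media(original)

    # Simulate a manipulation by altering part of the content.
    tampered = original.replace(b"published", b"manipulated")

    print(verify_media(original, tag))  # True
    print(verify_media(tampered, tag))  # False
```

The check only shows that a file is unchanged since it was tagged; it says nothing about whether the original recording was truthful, which is one reason authentication must be paired with the other measures described in this section.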

3. Platform and Industry Responsibility

Technology companies and social media platforms play a critical role in managing deepfake content. They should implement clear policies for detecting and removing harmful deepfake media, while also providing reporting tools for users. Platforms must invest in advanced detection systems and collaborate with fact-checkers and researchers to identify manipulated content quickly. In addition, they should offer support systems for victims of deepfake abuse and ensure transparency in how content is moderated, while balancing the need to protect free expression.

4. Education and Media Literacy

Education and media literacy are essential for preparing the public to deal with deepfake technology. Schools and institutions should teach individuals how to critically evaluate digital content and recognize signs of manipulation. Public awareness campaigns can help people understand the risks and avoid being misled by false information. Journalists and media professionals must also be trained to verify sources and maintain high standards of accuracy. Even as detection technologies improve, education remains crucial in building a society that is more resilient to misinformation and digital manipulation.

Figure 3: Education in classrooms plays a key role in improving digital literacy and helping students identify misinformation and deepfake content.

Future Outlook

The future of deepfake technology depends on how society chooses to respond today. If the issue is not addressed effectively, it could lead to increased misinformation, loss of trust in media, and greater exploitation of vulnerable individuals. This may weaken democratic systems and create confusion in everyday communication. However, if governments, technology companies, and society work together, it is possible to reduce these risks. With strong regulations, improved detection tools, and better education, deepfakes can be managed responsibly while still allowing beneficial uses in areas such as entertainment and education. The future is not fixed, and the actions taken now will shape how this technology impacts society.

Conclusion: A Multi-Stakeholder Approach

Addressing the challenges of deepfake technology requires a comprehensive and collaborative approach. No single solution is enough on its own, as effective responses must combine technological tools, clear policies, responsible platform practices, and strong public education. While the risks are significant, there is still an opportunity to manage this technology in a way that reduces harm and protects society. Ultimately, how we respond to deepfakes today will shape the future of trust, truth, and ethical responsibility in the digital world.

References

- Chesney, R., & Citron, D. (2018, December 11). Deepfakes and the new disinformation war: The coming age of post-truth geopolitics. Foreign Affairs. https://www.foreignaffairs.com/articles/world/2018-12-11/deepfakes-and-new-disinformation-war

- EU Artificial Intelligence Act: Up-to-date developments and analyses of the EU AI Act. (n.d.). https://artificialintelligenceact.eu/

- Kietzmann, J., Lee, L. W., McCarthy, I. P., & Kietzmann, T. C. (2021). DeepFakes: Trick or treat?

- Merlo, K. W. S. (2024, May 9). What is a deepfake? From research to policy and beyond. DeFake Project Blog. https://blog.defake.app/what-is-a-deepfake-from-research-to-policy-and-beyond/

- Vaccari, C., & Chadwick, A. (2020). Deepfakes and disinformation: Exploring the impact of synthetic political video on deception, uncertainty, and trust in news. Social Media + Society, 6(1). https://doi.org/10.1177/2056305120903408