Introduction to Deepfake Technology

Written by: @rs-its

What Are Deepfakes?

Deepfakes are synthetic media created using artificial intelligence to convincingly replace one person's likeness with another in video, audio, or images. The term combines "deep learning" and "fake," reflecting the AI technique used to generate these fabrications. Unlike traditional photo or video editing that requires manual manipulation, deepfake technology automates the process through machine learning algorithms that can swap faces, clone voices, and even generate entirely fabricated footage of people saying or doing things they never actually did.

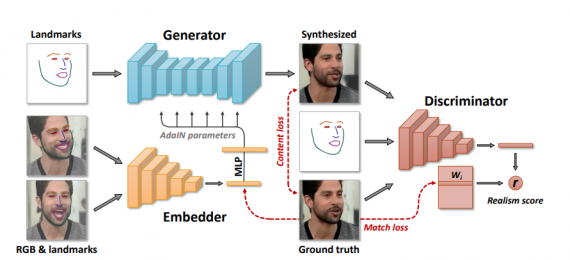

At the core of deepfake creation are neural networks called Generative Adversarial Networks, or GANs. A GAN pits two AI models against each other: a generator that produces fake content and a discriminator that tries to tell it apart from real examples. Over thousands of training iterations, the generator becomes increasingly skilled at creating realistic forgeries that can fool both the discriminator and human observers. What once required Hollywood-level resources and expertise can now be accomplished with consumer-grade computers and freely available software, making deepfakes accessible to virtually anyone with basic technical skills.

Figure 1: Diagram of a generative adversarial network (GAN).

Source: Neurohive (2019)
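
The adversarial loop described above can be shown with a deliberately tiny Python/NumPy sketch. It is not a real deepfake model. Following Goodfellow et al. (2014), the discriminator D is trained to maximize E[log D(x)] + E[log(1 - D(G(z)))], and the generator is trained with the "non-saturating" variant suggested in the same paper, maximizing E[log D(G(z))]. Everything else is an illustrative assumption: the "real data" is a one-dimensional Gaussian with mean 2.0, the generator G(z) = θ + z can only learn a shift θ, the discriminator is plain logistic regression D(x) = sigmoid(w·x + c), and the function name `train_toy_gan` and its hyperparameters are hypothetical.

```python
import numpy as np


def sigmoid(x):
    # Equivalent to 1 / (1 + exp(-x)), written with tanh to avoid overflow warnings.
    return 0.5 * (1.0 + np.tanh(0.5 * x))


def train_toy_gan(steps=4000, batch=1000, lr=0.05, real_mean=2.0, seed=0):
    """Hypothetical toy 1-D GAN; returns the generator's learned shift theta."""
    rng = np.random.default_rng(seed)
    theta = -2.0        # generator G(z) = theta + z starts far from the real data
    w, c = 0.0, 0.0     # discriminator D(x) = sigmoid(w * x + c)

    for _ in range(steps):
        # Discriminator step: gradient ascent on E[log D(real)] + E[log(1 - D(fake))].
        real = rng.normal(real_mean, 1.0, batch)
        fake = theta + rng.normal(0.0, 1.0, batch)
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        grad_w = np.mean((1.0 - d_real) * real) - np.mean(d_fake * fake)
        grad_c = np.mean(1.0 - d_real) - np.mean(d_fake)
        w += lr * grad_w
        c += lr * grad_c

        # Generator step: gradient ascent on E[log D(G(z))] (non-saturating loss).
        # d/dtheta log D(theta + z) = (1 - D(theta + z)) * w
        fake = theta + rng.normal(0.0, 1.0, batch)
        d_fake = sigmoid(w * fake + c)
        theta += lr * np.mean((1.0 - d_fake) * w)

    return float(theta)


if __name__ == "__main__":
    print(f"learned shift: {train_toy_gan():.2f} (real data mean: 2.0)")
```

The generator never sees the real data directly; its shift drifts toward the real mean only because it keeps chasing whatever the discriminator currently rewards. Real deepfake GANs replace θ and the logistic regression with deep convolutional networks operating on images, but the alternating two-player update follows the same pattern.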

How Deepfake Technology Works

The process begins with training data. To create a convincing deepfake of a specific person, the AI needs hundreds or thousands of images and video clips of that individual from different angles, lighting conditions, and expressions. The algorithm learns the unique patterns of their facial features, movements, and mannerisms. Once trained, the system can map these characteristics onto another person's face in a target video, adjusting frame by frame for angle, lighting, and movement; some tools can even do this in real time.
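
Adjusting for angle and movement typically starts with aligning facial landmarks between frames. As a minimal sketch, and not the procedure of any particular deepfake tool, the Python/NumPy code below estimates the least-squares similarity transform (scale, rotation, translation) that maps one set of 2-D landmarks onto another using Umeyama's method. It assumes the landmark coordinates have already been produced by some face-landmark detector, which is not shown; the function names and the five-point landmark layout are hypothetical.

```python
import numpy as np


def estimate_similarity_transform(src, dst):
    """Least-squares scale, rotation and translation mapping src landmarks onto dst
    (Umeyama, 1991). src and dst are (n, 2) arrays of corresponding points."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    mu_src, mu_dst = src.mean(axis=0), dst.mean(axis=0)
    src_c, dst_c = src - mu_src, dst - mu_dst

    # Cross-covariance between the centred point sets.
    cov = dst_c.T @ src_c / len(src)
    U, S, Vt = np.linalg.svd(cov)

    # Guard against returning a reflection instead of a proper rotation.
    d = np.ones(len(S))
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        d[-1] = -1.0

    R = U @ np.diag(d) @ Vt
    var_src = (src_c ** 2).sum() / len(src)
    scale = (S * d).sum() / var_src
    t = mu_dst - scale * R @ mu_src
    return scale, R, t


def apply_similarity(points, scale, R, t):
    """Map (n, 2) points with x -> scale * R x + t."""
    return scale * np.asarray(points, dtype=float) @ R.T + t


if __name__ == "__main__":
    # Hypothetical 5-point landmarks (eye centres, nose tip, mouth corners) in pixels.
    template = np.array([[30, 50], [70, 50], [50, 70], [35, 90], [65, 90]], float)
    angle = np.deg2rad(30)
    R_true = np.array([[np.cos(angle), -np.sin(angle)], [np.sin(angle), np.cos(angle)]])
    observed = apply_similarity(template, 1.5, R_true, np.array([10.0, -5.0]))
    scale, R, t = estimate_similarity_transform(template, observed)
    print(round(scale, 3), np.round(t, 3))
```

In a face-swap pipeline, a transform like this could be used to warp a generated face onto the target frame's pose. It only handles geometry, so lighting and colour differences would still need separate correction.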

Modern deepfake tools have become remarkably sophisticated. They can now replicate subtle details like skin texture, eye movements, and even the way someone's mouth moves when they speak. Voice cloning technology adds another layer of realism, using AI to synthesize speech that mimics a person's tone, accent, and speaking patterns. Some advanced systems can generate entirely fabricated videos from just a text prompt or a single photograph, greatly reducing the amount of footage of the target person that is needed.

Why Deepfakes Matter

Our team chose to focus on deepfake technology because it represents one of the most pressing ethical challenges at the intersection of artificial intelligence and society. "Seeing is believing" has long been treated as the gold standard for truth, and deepfakes fundamentally undermine our ability to trust visual evidence. This erosion of trust has far-reaching consequences for democracy, journalism, law enforcement, and interpersonal relationships.

The stakes are particularly high because deepfakes weaponize identity itself. Unlike traditional misinformation, which can often be debunked through fact-checking, a well-crafted deepfake carries the persuasive power of seemingly authentic video evidence. When political leaders appear to make outrageous statements they never made, when celebrities are inserted into fake scenarios without consent, or when ordinary people find their faces placed in compromising situations, the damage to reputation and trust can be swift and devastating.

The technology's rapid adoption makes it even more concerning. What required specialized expertise and expensive equipment just a few years ago can now be accomplished with smartphone apps. This accessibility means deepfakes are no longer limited to state actors or well-funded organizations; they are tools available to anyone with a grievance, a political agenda, or malicious intent. At the same time, detection methods struggle to keep pace with generation techniques, creating an asymmetric battle in which creating convincing fakes is often easier than identifying them.

Beyond the immediate harms, deepfakes force us to confront deeper questions about the nature of truth in digital society. If we can no longer trust what we see and hear, how do we distinguish fact from fiction? What happens to accountability when anyone can plausibly deny authentic evidence by claiming it is a deepfake? How do we protect vulnerable individuals from having their identity hijacked for malicious purposes? These are not just technical problems; they are fundamental challenges to how we organize democratic society, establish trust, and maintain social cohesion.

The Evolution of Deepfakes

The technology emerged from academic research labs in the early-to-mid 2010s, when researchers developed GANs (Goodfellow et al., 2014) to advance computer vision and image generation. By 2017, deepfake tools had migrated to online forums, where they were quickly weaponized to create non-consensual explicit videos. Since then, the technology has evolved rapidly. High-profile examples include fabricated videos of political leaders, celebrity hoaxes, and sophisticated financial fraud schemes that use cloned voices to impersonate executives.

Today, we are witnessing an arms race between creation and detection. Tech companies, researchers, and governments are working to develop tools that can identify synthetic media, while generation techniques simultaneously grow more advanced and harder to detect. This ongoing battle shapes how we must approach the ethical, legal, and technical challenges posed by this transformative technology.

Figure 2: Different types of deepfakes.

Source: Macul (2025)

References

- Chesney, R., & Citron, D. (2019). Deep fakes: A looming challenge for privacy, democracy, and national security. California Law Review, 107(6), 1753-1820. https://doi.org/10.15779/Z38RV0D15J

- Goodfellow, I., Pouget-Abadie, J., Mirza, M., Xu, B., Warde-Farley, D., Ozair, S., Courville, A., & Bengio, Y. (2014). Generative adversarial nets. Advances in Neural Information Processing Systems, 27. https://proceedings.neurips.cc/paper_files/paper/2014/file/f033e10dbe25-Paper.pdf

- Kietzmann, J., Lee, L. W., McCarthy, I. P., & Kietzmann, T. C. (2020). Deepfakes: Trick or treat? Business Horizons, 63(2), 135-146. https://doi.org/10.1016/j.bushor.2019.11.006

- Paul, O. A. (2021). Deepfakes generated by generative adversarial networks. Honors College Theses, 671. https://digitalcommons.georgiasouthern.edu/honors-theses/671

- Vaccari, C., & Chadwick, A. (2020). Deepfakes and disinformation: Exploring the impact of synthetic political video on deception, uncertainty, and trust in news. Social Media + Society, 6(1). https://doi.org/10.1177/2056305120903408

- Westerlund, M. (2019). The emergence of deepfake technology: A review. Technology Innovation Management Review, 9(11), 39-52. https://doi.org/10.22215/timreview/1282